
RHEV

Summary

We have a RHEV instance running on hv{01..04} as the main hypervisor nodes. They're listed as Hosts in the RHEV Manager.

The version currently installed on the RHEV Hosts is 4.3.5-1.

The RHEV Manager is a Self-Hosted VM inside the cluster. The username for logging in is admin and the password is our standard root password.

The purpose of the cluster is to provide High Availability for our critical services such as OpenVPN and DNS.

Storage

Note: this was the original configuration. Storage is now provided by an iSCSI service on the long-running cluster.

Two new storage chassis are being used as the storage nodes. ssdstore{01,02}.front.sepia.ceph.com are populated with 8x 1.5TB NVMe drives in software RAID6 configuration.

A third host, senta01, is configured as the arbiter node for the Gluster volume. 2x 240GB SSD drives are in a software RAID1 and mounted at /gluster.

Gluster

All VMs (except the Hosted Engine, which is on the hosted-engine volume) are backed by a sharded Gluster volume, ssdstorage. A sharded volume was chosen to decrease the time needed for the volume to heal after a storage failure. This should reduce VM downtime in the event of a storage node failure.

If there is a storage node failure, RHEV will use the remaining Gluster node and Gluster will automatically heal as part of the recovery process. It's possible a VM will be paused if its VM disk image changed while one of the storage nodes was down. Run gluster volume heal ssdstorage info to see heal status.
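
A rough way to boil that heal output down to a single number is sketched below. It assumes each brick section in the output ends with a "Number of entries:" line, which is the usual format; the function name is made up for this sketch.

```shell
#!/bin/sh
# Hypothetical helper: sum the per-brick "Number of entries:" counts
# from heal info output read on stdin. A result of 0 suggests nothing
# is waiting to be healed.
count_unhealed_entries() {
    awk -F': ' '/^Number of entries:/ { sum += $2 } END { print sum + 0 }'
}

# Usage on a storage node:
#   gluster volume heal ssdstorage info | count_unhealed_entries
```

A brick that is down reports "-" instead of a count, which this sketch treats as 0, so check the Status lines as well before trusting a zero.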

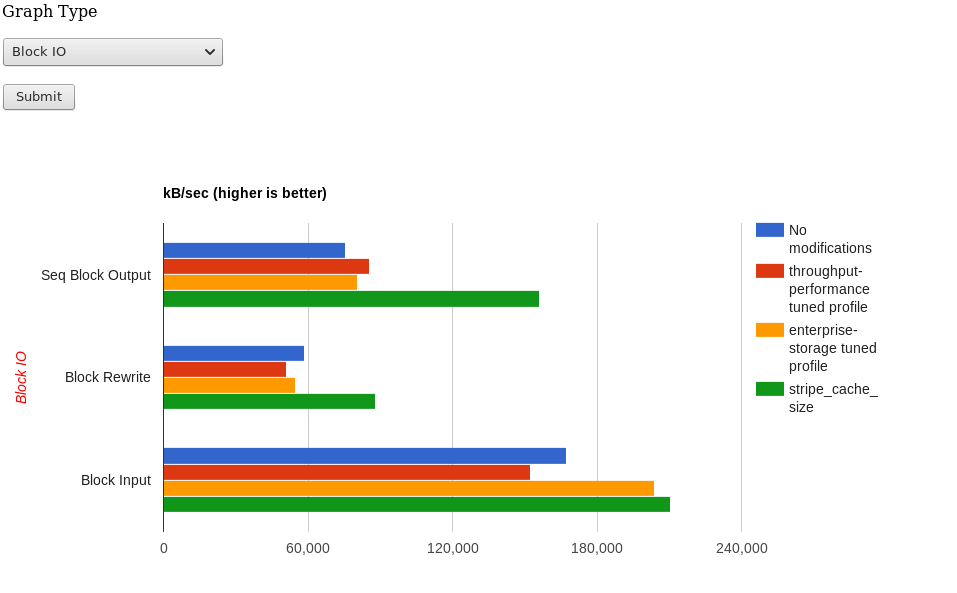

A single software RAID6 was chosen as the most redundant and reliable storage configuration. See the graph comparing the old storage against tests of various RAID5 and RAID6 configurations.

Backups

The HostedEngine VM (mgr01) has a crontab entry that backs up the RHV Manager every day. gitbuilder.ceph.com (gitbuilder-archive) pulls that backup file during its daily backup routine as long as mgr01 is reachable via the VPN tunnel.

If needed, the HostedEngine VM can be restored using one of these backup files. The backup includes a copy of the PostgreSQL database containing all metadata for the RHEV cluster.

Here's the cron job that runs on the RHEV-Manager VM:

@daily rm -f /root/backups/backup* && engine-backup --mode=backup --scope=all --file=/root/backups/backup.tar.gz --log=/root/backups/backup.log
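
Since the cron job removes the previous backup before writing a new one, a failed run leaves nothing behind. A minimal freshness check, assuming GNU find and a made-up 25-hour threshold, could look like this (the function name is hypothetical):

```shell
#!/bin/sh
# Hypothetical check: succeed only if the backup file exists and was
# modified within the last N minutes (default 1500, i.e. 25 hours).
backup_is_fresh() {
    file=$1
    max_age_min=${2:-1500}
    [ -f "$file" ] && [ -n "$(find "$file" -mmin "-$max_age_min")" ]
}

# Usage on mgr01:
#   backup_is_fresh /root/backups/backup.tar.gz || echo "backup is stale or missing"
```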

RHEV-Manager VM

If the HostedEngine VM dies, high availability of the RHEV VMs is lost. VMs will stay up, however. In other words, if the HostedEngine VM dies and a hypervisor host fails as well, the VMs that were running on the downed hypervisor will not automatically migrate to another hypervisor.

The backend storage for the VM was moved from an NFS export on store01 to a separate gluster volume using ssdstore{01,02}.

Run hosted-engine --vm-status on any hypervisor to check if the VM is running.

Other Notes

The Hypervisors (hv{01..04}) and Storage nodes (ssdstore{01..02}) have entries in /etc/hosts in case of DNS failure.
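
To confirm those entries are still in place on a given node, something like the following sketch could be used. The function name is hypothetical, and the short hostnames in the usage line are an assumption; pass whatever names your hosts file actually uses.

```shell
#!/bin/sh
# Hypothetical helper: print each given hostname that does NOT appear
# as a name on a non-comment line of the hosts file.
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        if ! awk -v n="$name" '
            !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$hosts_file"; then
            printf '%s\n' "$name"
        fi
    done
}

# Usage:
#   missing_hosts /etc/hosts hv01 hv02 hv03 hv04 ssdstore01 ssdstore02
```

No output means every name was found.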

Note: it is important that the version of glusterfs packages on the hypervisors does not exceed the version on the storage nodes (i.e. client is older or equal to server).
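
One way to check that rule before updating packages is a version comparison with sort -V (GNU coreutils). This is only a sketch; the function name is made up, and the rpm query in the usage comment assumes RPM-based hosts.

```shell
#!/bin/sh
# Hypothetical helper: succeed if version $1 is older than or equal to
# version $2, using GNU sort's version ordering.
version_le() {
    [ "$1" = "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" ]
}

# Usage (run the rpm query on a hypervisor and on a storage node):
#   client=$(rpm -q --qf '%{VERSION}' glusterfs)
#   version_le "$client" "$server" || echo "client is newer than server"
```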

Creating New VMs

How-To

- Log in

- Go to the Virtual Machines tab

- Click New VM

- General Settings

- Cluster: hv_cluster

- Operating System: Linux

- Optimized for: Server

- Name: Whatever you want

- Descriptions are also nice

- System

- Memory Size: Up to you (it can take 4GB as input and will convert)

- Total Virtual CPUs: Also up to you

- High Availability

- Highly Available: Checked (if desired)

- Set the Priority

- Boot Options

- Probably PXE then Hard Disk. This will boot to our Cobbler menu.

- You could also do CD-ROM then Hard Disk. Just check Attach CD and select the ISO (these are on store01.front.sepia.ceph.com:/srv/isos/67ff9a5d-b5da-4a2f-b5ce-2286bc82e3e4/images/11111111-1111-1111-1111-111111111111 if you want to add one)

- OK

- Now highlight your new VM

- At the bottom, Disks tab

- New

- Set the Size

- Storage Domain: ssdstorage

- Allocation Policy: Preallocated if IO performance is important

- Preallocated will take longer to create the disk but IO performance in the VM will be faster

- Thin Provision is almost immediate during VM creation but may slow down VM IO performance

- OK

- At the bottom, Network Interfaces tab

- New

- Profile should be front or wan (or both [one of them being on a second NIC] if desired).

- OK

- Now power the VM up (green arrow) and open the console (little computer monitor icon)

- You can either

- Select an entry from the Cobbler PXE menu (if the new VM is NOT in the ansible inventory)

- Make sure you press [Tab] and delete almost all of the kickstart parameters (the ks= most importantly)

- Add the host to the ansible inventory, and thus, Cobbler, DNS, and DHCP, then set a kickstart in the Cobbler Web UI (see below)

Using a Kickstart with Cobbler

The Sepia Cobbler instance has some kickstart profiles that will automate RHV VM installation. I think in order to use these, you'd have to get the MAC from the Network Interfaces tab in RHV, then put your new VM in the ceph-sepia-secrets ansible inventory.

Then run:

    ansible-playbook cobbler.yml --tags systems
    ansible-playbook dhcp-server.yml
    ansible-playbook nameserver.yml --tags records

(See https://wiki.sepia.ceph.com/doku.php?id=tasks:adding_new_machines for more info)
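
Because a mistyped MAC will quietly break DHCP and PXE for the new VM, it may be worth sanity-checking the value copied from the Network Interfaces tab before it goes into the inventory. The sketch below is a hypothetical helper; lowercasing the address is an assumption made for consistency, not something the inventory is known to require.

```shell
#!/bin/sh
# Hypothetical helper: print the MAC in lowercase if it looks like
# six colon-separated hex octets, otherwise fail with a message.
normalize_mac() {
    mac=$(printf '%s' "$1" | tr 'A-F' 'a-f')
    if printf '%s\n' "$mac" | grep -Eq '^([0-9a-f]{2}:){5}[0-9a-f]{2}$'; then
        printf '%s\n' "$mac"
    else
        echo "not a valid MAC address: $1" >&2
        return 1
    fi
}

# Usage:
#   normalize_mac "$MAC_FROM_RHV"
```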

In cobbler, you can browse to the system and set the Profile and Kickstart:

- dgalloway-ubuntu-vm - Installs a basic Ubuntu installation using the entire disk and ext4 filesystem. I couldn't get xfs working.

- dgalloway-rhel-vm - I don't remember if this one works but you can try.

A note about installing RHEL/CentOS

You need to specify the URL for the installation repo as a kernel parameter. So in the Cobbler PXE menu, when you hit [Tab], add ksdevice=link inst.repo=http://172.21.0.11/cobbler/ks_mirror/CentOS-X.X-x86_64 replacing X.X with the appropriate version.

Otherwise you'll end up with an error like dracut initqueue timeout and the installer dies.
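
To avoid typos when entering those parameters at the PXE prompt, the string can be generated for a given version and copied over. This is just a sketch; the function name is made up, and whether a particular X.X directory exists under ks_mirror still has to be checked on the Cobbler host.

```shell
#!/bin/sh
# Hypothetical helper: print the extra kernel parameters for a
# CentOS X.X install from the Cobbler mirror.
centos_repo_args() {
    case $1 in
        [0-9]*.[0-9]*) ;;
        *) echo "expected a version like X.X, got: $1" >&2; return 1 ;;
    esac
    printf 'ksdevice=link inst.repo=http://172.21.0.11/cobbler/ks_mirror/CentOS-%s-x86_64\n' "$1"
}

# Usage:
#   centos_repo_args "$CENTOS_VERSION"
```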

ovirt-guest-agent

After installing a new VM, be sure to install the VM guest agent. This, at the very least, allows a VM's FQDN and IP address(es) to show up in the RHEV Web UI.

    git clone https://github.com/djgalloway/sepia.git
    cd ansible-playbooks
    ansible-playbook ovirt-guest-agent.yml --limit="$NEW_VM"

Migrating from Openstack to oVirt

Taken from https://docs.fuga.cloud/how-to-migrate-a-volume-from-one-openstack-provider-to-another

I think these steps are only applicable if the instance has a standalone volume for the root disk (which I try to do for most Openstack instances)

    # Find UUID of the instance's root drive
    openstack server list
    # Create a snapshot of the volume
    openstack volume snapshot create --volume $UUID_OF_ROOT_VOLUME --force telemetry-snapshot
    # Create a separate *volume* from the snapshot
    openstack volume create --snapshot telemetry-snapshot --size 50 telemetry-volume
    # Create an image from the volume (this will take a long time)
    openstack image create --volume telemetry-volume telemetry-image
    # Download the image (this will take a long time)
    openstack image save --file snapshot.raw telemetry-image

Now proceed with the steps below.

Migrating libvirt/KVM disk to oVirt

Ubuntu

    # Get the latest import-to-ovirt.pl from http://git.annexia.org/?p=import-to-ovirt.git;a=summary
    # From the baremetal host running the VM to migrate,
    apt-get install libxml-writer-perl libguestfs-perl nfs-common libguestfs-tools
    update-guestfs-appliance
    mkdir /export
    mount -o v3 store01.front.sepia.ceph.com:/srv/rhev_export /export
    import-to-ovirt.pl /path/to/disk.img /export
    # Follow instructions in import-to-ovirt.pl output
    # Once imported, adjust vCPUs and Memory as needed
    # Also, add a NIC. You can re-use the same MAC address

Fixes to VMs imported from Openstack

- In RHV, boot into a system rescue ISO (set the boot order for the VM to CD then HDD)

- Mount the root disk and modify /etc/fstab if needed

- Edit /boot/grub/grub.cfg removing any console= lines from the default boot entry

- (Make sure you make these changes persistent for subsequent reboots. See https://askubuntu.com/a/921830/906620, for example)

- Edit network config

- It's also probably beneficial to remove cloud-init: e.g., apt-get purge cloud-init

- Even though cloud-init is purged, its grub.d settings still get read.

- It might work to just delete /etc/default/grub.d/50-cloudimg-settings.cfg but otherwise,

- Modify it and get rid of any console= parameters

- Run update-grub
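
Getting rid of the console= parameters by hand is error-prone, so here is a sketch of a sed filter that does it. The function name is hypothetical. It only drops parameters named exactly console= (so something like netconsole= is left alone) and may leave extra spaces behind, which the kernel command line ignores.

```shell
#!/bin/sh
# Hypothetical filter: remove console=... kernel parameters from the
# text on stdin, keeping the character that preceded each one.
strip_console_params() {
    sed -E 's/(^|[[:space:]"])console=[^[:space:]"]+/\1/g'
}

# Usage (review the result before replacing the original file):
#   f=/etc/default/grub.d/50-cloudimg-settings.cfg
#   strip_console_params < "$f" > /tmp/50-cloudimg-settings.cfg
```

Run update-grub after putting the edited file in place.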

Troubleshooting

/var/log/vdsm/vdsm.log is a useful place to check for errors on the hypervisors. To check for errors relating to the HostedEngine VM, see /var/log/ovirt-hosted-engine-ha/agent.log.

The vdsmd, ovirt-ha-agent, and ovirt-ha-broker services can be restarted on hypervisors without affecting running VMs.

Emergency RHEV Web UI Access w/o VPN

In the event the OpenVPN gateway VM is inaccessible/locked up/whatever, you can open an SSH tunnel (ssh -D 9999 $YOURUSER@8.43.84.133) and set your browser's proxy settings to SOCKS5 localhost:9999 to get at the RHEV web UI. That public IP is on store01 and is a leftover artifact from when store01 ran OpenVPN.

GFIDs listed in gluster volume heal ssdstorage info forever

This is https://bugzilla.redhat.com/show_bug.cgi?id=1361518.

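As long as the unsynced entries are GFIDs only and they only appear under the arbiter (senta01) server, you can run the following script. The original instructions were to paste just the GFIDs into a /tmp/gfids file first, but as written the script reads the GFIDs directly from the heal info output.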

    #!/bin/bash
    # Clears trusted.afr.* xattrs for GFIDs stuck in the heal info output.
    # Paths assume the arbiter brick lives under /gluster/arbiter.
    set -ex

    VOLNAME=ssdstorage

    # GFIDs are UUIDs: 8-4-4-4-12 hex digits
    for id in $(gluster volume heal $VOLNAME info | grep -Eo '[0-9a-f]{8}-([0-9a-f]{4}-){3}[0-9a-f]{12}'); do
        file=$(find /gluster/arbiter/.glusterfs -name "$id" -not -path '/gluster/arbiter/.glusterfs/indices/*' -type f)
        # Only touch entries where both afr xattrs start with zeroes
        if [ "$(getfattr -d -m . -e hex $file | grep "trusted.afr.$VOLNAME" | grep "0x000000" | wc -l)" -eq 2 ]; then
            echo "deleting xattr for gfid $id"
            for i in $(getfattr -d -m . -e hex $file | grep "trusted.afr.$VOLNAME" | cut -f1 -d'='); do
                setfattr -x "$i" $file
            done
        else
            echo "not deleting xattr for gfid $id"
        fi
    done

Maintenance

Quirks Encountered

FIXED: See https://bugzilla.redhat.com/show_bug.cgi?id=1363926#c2

If the HostedEngine VM goes offline, it may not come back up. After a long night of troubleshooting, it was discovered that the XML for the VM stored on the NFS “rhevstor” export is invalid.

To work around this error, or if the RHEV-Manager VM fails to start for any other reason, try this first:

    hosted-engine --vm-start --vm-conf=/etc/ovirt-hosted-engine/vm.conf

OR

    hosted-engine --vm-start --vm-conf=/var/run/ovirt-hosted-engine-ha/vm.conf
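
Which of the two files exists can differ from host to host, so a small helper that picks the first readable one may save a step. This is a sketch with a made-up function name; the two paths are the ones given above.

```shell
#!/bin/sh
# Hypothetical helper: print the first readable file among the
# arguments, or fail if none of them is readable.
pick_vm_conf() {
    for conf in "$@"; do
        if [ -r "$conf" ]; then
            printf '%s\n' "$conf"
            return 0
        fi
    done
    return 1
}

# Usage on a hypervisor:
#   conf=$(pick_vm_conf /etc/ovirt-hosted-engine/vm.conf \
#                       /var/run/ovirt-hosted-engine-ha/vm.conf) &&
#       hosted-engine --vm-start --vm-conf="$conf"
```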

Updating Hypervisors

hv{01..04} are running production RHEL7 and registered with RHSM.

I used to have a summary of steps here but it's safer to just follow the Red Hat docs.

VM has paused due to no storage space error

We started seeing this issue on VMs like teuthology, and it looks like it's a known bug. I updated /etc/vdsm/vdsm.conf.d/99-local.conf and restarted vdsmd (systemctl restart vdsmd) as described here:

Growing a VM's virtual disk

- Log into the Web UI

- Go to Virtual Machines tab

- Shut down the VM

- Highlight the VM then open the Snapshots tab at the bottom

- Create a snapshot in case anything goes wrong with growing the disk

- Under the Disks tab, highlight the disk and click Edit

- Update Extend size by (GB) and save

- Now you'll need to grow the partition

- Right-click the VM and click Edit

- Change the boot order under Boot Options, if necessary, to PXE then HDD

- (Or just boot to the gparted live CD and it'll handle growing partition and ISO for you)

- Bring the VM back up and open a console

- Choose inktank-rescue from the Cobbler PXE menu (Assumes not managed by Cobbler)

- Log in as root

- Follow instructions on https://access.redhat.com/articles/1190213

- If the filesystem is xfs instead of ext,

    mount /dev/vda3 /mnt/vda3
    xfs_growfs /dev/vda3

- Reboot to the OS

If anything goes wrong, you can shut the VM down, select the snapshot you made, click Preview and boot back to it to revert your changes. Click Commit if you want to permanently revert to the snapshot.
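
The filesystem step in the list above depends on the filesystem type. A sketch of that decision follows; the function name is hypothetical, and it only prints the command so you can review it first. It assumes resize2fs for ext filesystems and passes the mount point to xfs_growfs, since xfs_growfs works on a mounted filesystem.

```shell
#!/bin/sh
# Hypothetical helper: print the command that grows the filesystem,
# given its type, block device, and mount point. The partition itself
# must already have been grown.
grow_fs_command() {
    fstype=$1
    device=$2
    mountpoint=$3
    case $fstype in
        xfs) printf 'xfs_growfs %s\n' "$mountpoint" ;;
        ext2|ext3|ext4) printf 'resize2fs %s\n' "$device" ;;
        *) echo "unsupported filesystem: $fstype" >&2; return 1 ;;
    esac
}

# Usage from the rescue environment:
#   grow_fs_command "$(blkid -o value -s TYPE /dev/vda3)" /dev/vda3 /mnt/vda3
```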

Onlining Hot-Plugged CPU/RAM

https://askubuntu.com/a/764621

    #!/bin/bash
    # Based on script by William Lam - http://engineering.ucsb.edu/~duonglt/vmware/

    # Bring CPUs online
    for CPU_DIR in /sys/devices/system/cpu/cpu[0-9]*
    do
        CPU=${CPU_DIR##*/}
        echo "Found cpu: '${CPU_DIR}' ..."
        CPU_STATE_FILE="${CPU_DIR}/online"
        if [ -f "${CPU_STATE_FILE}" ]; then
            if grep -qx 1 "${CPU_STATE_FILE}"; then
                echo -e "\t${CPU} already online"
            else
                echo -e "\t${CPU} is new cpu, onlining cpu ..."
                echo 1 > "${CPU_STATE_FILE}"
            fi
        else
            echo -e "\t${CPU} already configured prior to hot-add"
        fi
    done

    # Bring all new Memory online
    for RAM in $(grep line /sys/devices/system/memory/*/state)
    do
        echo "Found ram: ${RAM} ..."
        if [[ "${RAM}" == *":offline" ]]; then
            echo "Bringing online"
            echo $RAM | sed "s/:offline$//"|sed "s/^/echo online > /"|source /dev/stdin
        else
            echo "Already online"
        fi
    done